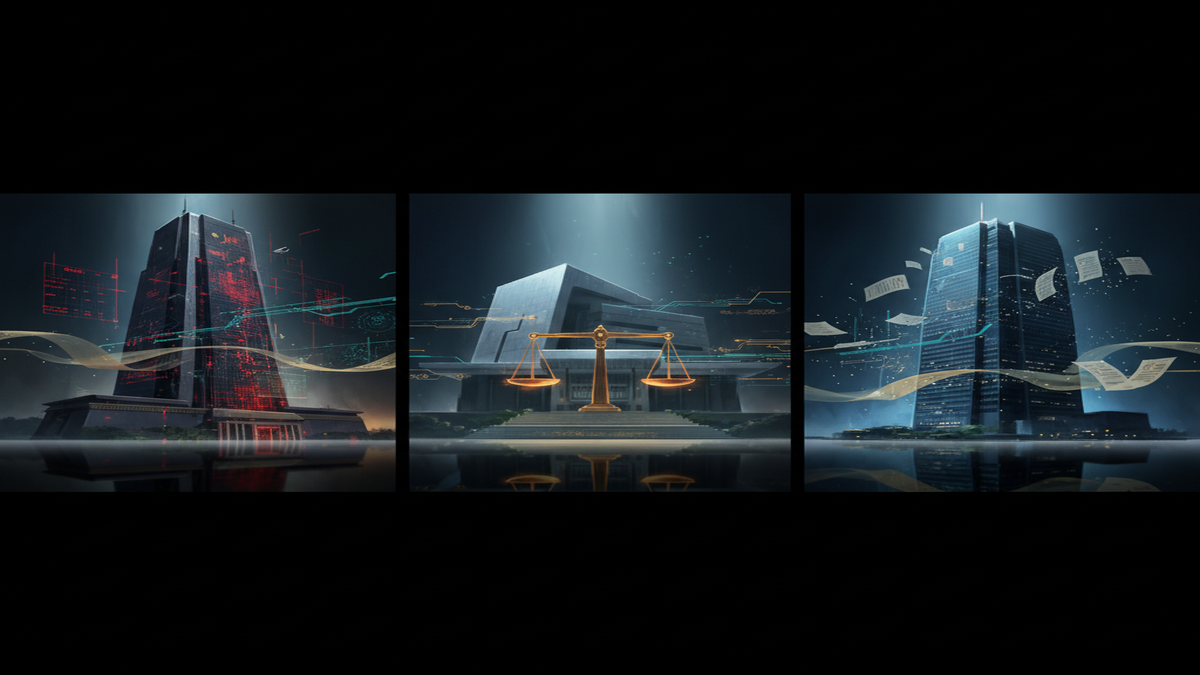

Three Laws of AI: How China, Japan, and South Korea Are Writing Very Different Rulebooks

China enforces binding sector-specific AI rules backed by service shutdowns. South Korea just activated a sweeping framework law with fines and risk classifications. Japan bets entirely on voluntary guidelines. Three neighbouring economies, three radically different philosophies, and a growing compliance headache for any business operating across East Asia.


Three neighbouring economies. Three radically different bets on how to govern artificial intelligence. As the rest of the world watches Europe's AI Act take shape and Washington dither over federal legislation, **East Asia** is quietly running the planet's most consequential policy experiment: what happens when countries with deeply intertwined supply chains, overlapping talent pools, and competing strategic ambitions each choose a fundamentally different regulatory philosophy?

**China** has built a thicket of binding, sector-specific rules enforced by powerful regulators. **South Korea** has just switched on a sweeping new framework law that tries to balance innovation with hard compliance. And **Japan** is wagering that voluntary guidelines, industry self-regulation, and gentle government nudges will be enough to keep its AI ecosystem both competitive and safe.

The stakes are enormous. These three economies account for the majority of the Asia-Pacific AI market, which exceeded US$83 billion in 2025, according to Fortune Business Insights. The regulatory paths they choose will not only shape their own industries but also set precedents that ripple across Southeast Asia, India, and beyond. As we explored in our analysis of how [Asia's AI regulation rift is already costing billions](/policy/asia-ai-regulation-splintering-compliance-costs-billions-2026), fragmentation carries real economic consequences.

Here is how the three approaches compare, where they clash, and what it all means for businesses operating across the region.

---

## The Regulation Matrix: Three Philosophies at a Glance

| Dimension | China | South Korea | Japan |
|---|---|---|---|
| **Core philosophy** | State-led control and security | Framework law balancing innovation and trust | Innovation-first soft law |
| **Primary legislation** | Sector-specific rules (Generative AI Measures, amended Cybersecurity Law, Content Labelling Measures) | AI Basic Act (effective 22 January 2026) | AI Promotion Act (non-binding) + AI Guidelines for Business |
| **Binding force** | Fully binding with enforcement actions | Binding with phased enforcement | Voluntary; relies on existing laws (APPI, Copyright Act) |
| **Scope** | All AI services operating in China; extra focus on generative AI and content | High-impact AI, high-performance AI (>10²⁶ FLOPs), generative AI | All AI actors (developers, providers, users); no mandatory obligations |
| **Generative AI rules** | Mandatory content labelling, watermarking, LLM security filings | Mandatory labelling and watermarking of AI-generated content | Voluntary disclosure recommended |
| **Penalties** | Service suspension, fines, criminal liability | Fines up to KRW 30 million (~US$21,000); potential imprisonment | None (soft law); existing laws apply for specific harms |
| **Risk classification** | Implicit (high-risk sectors like deepfakes, recommendation algorithms) | Explicit: high-impact AI and high-performance AI tiers | None; risk-based approach recommended but not mandated |
| **Lead regulator** | Cyberspace Administration of China (CAC) | Ministry of Science and ICT (MSIT), AI Strategy Council | No single regulator; METI, MIC, Digital Agency coordinate |
| **International alignment** | Distinct Chinese framework; contributes to UN and bilateral forums | Aligned broadly with EU AI Act concepts | Aligned with G7 Hiroshima AI Process; OECD principles |

---

## Binding Rules vs. Soft Power: The Philosophical Divide

The most striking difference is not in the details but in the underlying theory of governance.

China's approach starts from a premise of **state authority and content control**. Since 2022, Beijing has rolled out a succession of targeted regulations covering algorithmic recommendation, deepfake synthesis, generative AI services, and, most recently, mandatory labelling of all AI-generated content. The Cyberspace Administration's March 2025 Measures for Labelling AI-Generated Synthesised Content require every online platform to embed visible watermarks and invisible metadata tags in AI-created text, images, audio, and video. Platforms that fail to comply face service suspension; in July 2024, two AI companies were ordered offline for failing to complete mandatory security assessments and large language model filings.

The amended **Cybersecurity Law**, which took effect on 1 January 2026, marked another escalation. For the first time, it introduced dedicated AI compliance provisions alongside its existing data-security framework, signalling that Beijing views AI governance as inseparable from its broader cybersecurity architecture.

South Korea's **AI Basic Act** represents a different wager: a single, comprehensive law that attempts to cover the entire AI lifecycle in one legislative package. Effective since 22 January 2026, the Act defines two key categories of regulated systems. **High-impact AI** covers applications with significant consequences for human life, safety, or fundamental rights, including hiring decisions, loan assessments, healthcare, government operations, and biometric analysis for criminal investigations. **High-performance AI** targets frontier models trained with more than 10²⁶ floating-point operations.

Operators of these systems must conduct risk assessments, maintain explainability, implement human oversight, and notify users that AI is being used. For generative AI specifically, the law requires mandatory labelling and watermarking. Non-compliance carries fines of up to KRW 30 million (approximately US$21,000) and potential imprisonment for serious violations, though [South Korea's broader push to commercialise AI](/business/south-korea-ax-sprint-ai-commercialisation) suggests enforcement will initially favour guidance over punishment.
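To make the compute threshold concrete, the sketch below estimates a model's training compute with the widely used "6 × parameters × training tokens" rule of thumb from scaling-law research and checks the result against the 10²⁶ figure. The heuristic, the function names, and the example model sizes are assumptions for illustration only; how compute is actually counted is set by the Act and its implementing rules, not by this approximation.

```python
# Minimal sketch: would a (hypothetical) model fall into South Korea's
# "high-performance AI" tier? Uses the 6*N*D rule of thumb, an assumption
# borrowed from scaling-law research, not the Act's official counting method.

HIGH_PERFORMANCE_THRESHOLD_FLOPS = 1e26  # cumulative training compute


def estimate_training_flops(params: float, tokens: float) -> float:
    """Approximate total training compute as 6 x parameters x training tokens."""
    return 6.0 * params * tokens


def is_high_performance(params: float, tokens: float,
                        threshold: float = HIGH_PERFORMANCE_THRESHOLD_FLOPS) -> bool:
    """True if the estimated training compute is strictly above the threshold."""
    return estimate_training_flops(params, tokens) > threshold


if __name__ == "__main__":
    # Hypothetical models: (parameters, training tokens)
    examples = {
        "70B params, 15T tokens": (70e9, 15e12),
        "1T params, 20T tokens": (1e12, 20e12),
    }
    for name, (n, d) in examples.items():
        flops = estimate_training_flops(n, d)
        print(f"{name}: ~{flops:.1e} FLOPs, high-performance tier: {is_high_performance(n, d)}")
```

Under this approximation, a 70-billion-parameter model trained on 15 trillion tokens lands at roughly 6.3 × 10²⁴ FLOPs, well below the tier, while a trillion-parameter model trained on 20 trillion tokens (about 1.2 × 10²⁶) would cross it.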

Japan stands apart. Rather than legislating new obligations, Tokyo has opted for what scholars call **"agile governance"**: a philosophy built on voluntary guidelines, multi-stakeholder coordination, and iterative improvement through plan-do-check-act cycles. The AI Promotion Act, Japan's primary AI statute, is deliberately non-binding. It defines AI broadly, positions it as a strategic national asset, and outlines four guiding principles, but it creates no enforceable requirements and establishes no dedicated regulator.

The operational weight instead falls on the **AI Guidelines for Business**, released jointly by the Ministry of Economy, Trade and Industry (METI) and the Ministry of Internal Affairs and Communications (MIC) in April 2024 and updated in March 2025. These guidelines articulate ten cross-sector principles, from fairness and privacy to accountability and education, and include checklists for developers, providers, and users. But compliance is entirely voluntary.

> "Instead of rigid regulation, Japan relies on the non-binding Act on the Promotion of Research, the 2024 AI Business Operator Guidelines, and guidance on the interpretation of existing statutes." — International Bar Association, Japan AI Governance Analysis

---

## Where It Gets Complicated: Cross-Border Business Impact

For multinational companies operating across East Asia, the regulatory divergence creates a compliance puzzle with no easy solution.

A generative AI platform launching in all three markets must navigate China's mandatory security assessments and content-labelling regime, carry out the risk assessments South Korea requires and implement its watermarking rules, and voluntarily adopt Japan's best-practice guidelines, all while maintaining a single product that satisfies three different regulatory philosophies. As our coverage of [Asia's AI privacy rules getting expensive](/policy/asia-ai-data-privacy-regulation-compliance-costs-2026) detailed, the cost of multi-jurisdictional compliance is already running into hundreds of millions of dollars for the largest technology firms.

The divergence is especially acute around **content labelling**. China demands both visible and invisible markers on all AI-generated content. South Korea requires clear labelling and watermarking for generative AI outputs. Japan recommends, but does not require, disclosure. A company that builds a single content-generation pipeline must decide: does it apply the strictest standard (China's) universally, or does it create separate compliance stacks for each market?
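The trade-off can be modelled in a few lines. The sketch below encodes a deliberately simplified version of the mandatory labelling duties as this article summarises them, then compares a single "strictest standard" pipeline with separate per-market stacks by listing the extra labels each market would receive under the single pipeline. The market codes, duty names, and function names are hypothetical, and the mapping is an illustrative simplification rather than a statement of what each law requires.

```python
# Hypothetical, simplified model of mandatory AI-content labelling duties,
# based only on this article's summary (China: visible markers plus invisible
# metadata; South Korea: labelling plus watermarking; Japan: disclosure
# recommended but not required). Not a legal mapping.

MANDATORY_LABELS: dict[str, frozenset[str]] = {
    "CN": frozenset({"visible_label", "invisible_metadata"}),
    "KR": frozenset({"visible_label", "watermark"}),
    "JP": frozenset(),  # voluntary only, so no mandatory duties
}


def strictest_standard(markets: list[str]) -> frozenset[str]:
    """Single-pipeline strategy: apply every duty required in any target market."""
    return frozenset().union(*(MANDATORY_LABELS[m] for m in markets))


def per_market_stacks(markets: list[str]) -> dict[str, frozenset[str]]:
    """Separate-stack strategy: each market gets only its own mandatory duties."""
    return {m: MANDATORY_LABELS[m] for m in markets}


def over_compliance(markets: list[str]) -> dict[str, frozenset[str]]:
    """Labels a market receives under the single pipeline beyond what it requires."""
    universal = strictest_standard(markets)
    return {m: universal - MANDATORY_LABELS[m] for m in markets}


if __name__ == "__main__":
    markets = ["CN", "KR", "JP"]
    print("Single pipeline applies:", sorted(strictest_standard(markets)))
    for market, extra in over_compliance(markets).items():
        print(f"{market}: extra labels under single pipeline -> {sorted(extra)}")
```

In this toy model, the single pipeline means users in Japan see every label the other two markets demand, none of which Japanese law requires; separate stacks avoid that over-compliance at the cost of maintaining three configurations.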

Data governance adds another layer. China's amended Cybersecurity Law imposes strict data localisation and cross-border transfer requirements. South Korea's AI Basic Act works alongside existing data-protection statutes, notably the Personal Information Protection Act (PIPA), which impose their own constraints. Japan's Act on the Protection of Personal Information (APPI) is comparatively permissive but is undergoing its own AI-related interpretive updates.

The practical result is that **regulatory arbitrage** is becoming a real strategic consideration. Some AI startups are choosing to headquarter in Japan specifically because its lighter regulatory touch reduces time-to-market, while others are prioritising China's market access despite the heavier compliance burden. South Korea, sitting in the middle, is pitching its framework as a "balanced" alternative that gives businesses clearer rules without China's political-control elements.

---

## By The Numbers

**US$83.75 billion**: Asia-Pacific AI market size in 2025 (Fortune Business Insights)

**US$19.8 billion**: Japan's AI market in 2025, the largest confirmed national figure among the three (Grand View Research)

**US$98 billion**: China's planned AI investment for 2025, including US$56 billion in government spending (Fortune Business Insights)

**US$560 million**: South Korea's AX Sprint programme for AI commercialisation, announced March 2026

**KRW 30 million (~US$21,000)**: Maximum fine per violation under South Korea's AI Basic Act

**10²⁶ FLOPs**: The training-compute threshold that triggers South Korea's "high-performance AI" regulatory tier

**0**: Number of binding AI-specific laws in Japan; governance relies entirely on voluntary guidelines and existing statutes

---

## Scout View: Key Takeaways

**If you are in a hurry**, here is what matters:

China governs AI through a web of binding, sector-specific rules backed by real enforcement, including service shutdowns. South Korea has just activated a single comprehensive law that classifies AI systems by risk and imposes mandatory transparency, labelling, and oversight requirements with financial penalties. Japan alone among the three relies entirely on voluntary guidelines and existing law, betting that its "agile governance" model will preserve innovation without sacrificing safety.

For businesses, the bottom line is that operating across all three markets now requires three distinct compliance strategies. The days of a single pan-Asian regulatory approach are over. [China's latest five-year plan makes AI the centrepiece of its economy](/news/china-five-year-plan-ai-economy), South Korea is spending heavily to commercialise AI within its new legal guardrails, and Japan is positioning itself as the region's most business-friendly AI jurisdiction, though critics warn that voluntary frameworks may struggle to address harms at scale.

---

## FAQ

**Which country has the strictest AI regulations?**

China, by a significant margin. Its regulations are fully binding, enforced by the powerful Cyberspace Administration, and have already resulted in AI companies being ordered to suspend services for non-compliance. The regime covers content labelling, algorithmic transparency, deepfake controls, and mandatory security assessments, all backed by real penalties including criminal liability.

**Is South Korea's AI Basic Act similar to the EU AI Act?**

There are structural parallels. Both use a risk-based classification system to apply stricter rules to higher-risk AI applications, and both impose transparency and labelling requirements on generative AI. However, South Korea's Act is narrower in scope, its penalties are significantly lower (a maximum of KRW 30 million, versus EU fines of up to €35 million or 7% of global annual turnover, whichever is higher), and enforcement is expected to be phased in gradually through 2027.

**Why hasn't Japan passed binding AI regulations?**

Japan's government has made a deliberate strategic choice. Officials and legal scholars argue that binding regulation would slow innovation in a market where Japan is already playing catch-up to China and the United States. The "agile governance" philosophy favours voluntary guidelines, multi-stakeholder coordination, and rapid iterative updates over the slower legislative process. Proponents cite the G7 Hiroshima AI Process as evidence that Japan can shape global norms without domestic mandates. Critics counter that voluntary compliance is insufficient to address systemic risks like bias, misinformation, and privacy violations at scale.

**How does this affect companies operating across all three markets?**

Companies must now maintain parallel compliance frameworks. At minimum, this means implementing China's mandatory content labelling and security assessments, meeting South Korea's risk-assessment and watermarking requirements, and demonstrating alignment with Japan's voluntary guidelines (which, while not legally required, are increasingly expected by Japanese partners and government procurement processes). The cost and complexity of multi-jurisdictional compliance are rising, and as [ASEAN shifts from guidelines to binding rules](/policy/asean-shifts-from-ai-guidelines-to-binding-rules), the patchwork is only growing.

---

## Closing Thoughts

East Asia's three-way regulatory experiment has no clear winner, at least not yet. China's muscular approach offers certainty and control but risks stifling the open-ended experimentation that drives AI breakthroughs. South Korea's framework law is ambitious in scope but untested in enforcement, with the real proof coming when regulators must decide whether to penalise major domestic champions like Samsung or Naver. Japan's soft-law gamble preserves maximum flexibility for its AI industry but leaves citizens and smaller businesses with few enforceable protections.

What is already clear is that the era of regulatory convergence in East Asia, if it ever truly existed, is over. Businesses, investors, and policymakers would do well to study all three models carefully, because the lessons emerging from this experiment will shape AI governance worldwide for years to come.